Course Introduction

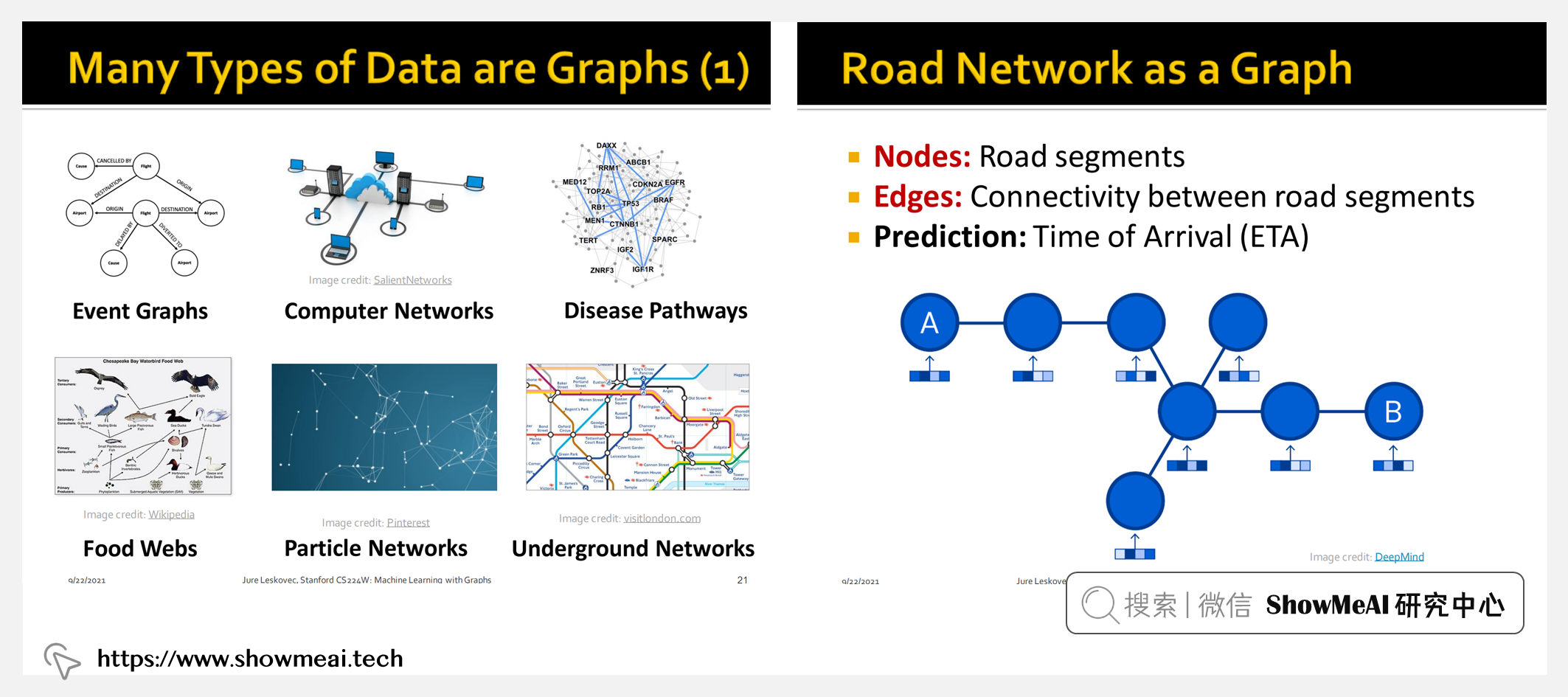

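Graphs are a powerful data structure for modeling many real-world scenarios, because they capture the relationships between samples. Real-world graphs, however, are enormous, often with more than a hundred million nodes, and have complex internal topology, which makes it hard to apply traditional graph analysis methods such as shortest paths, DFS, BFS, and PageRank to these tasks. Researchers have therefore proposed combining machine learning with graph data, an approach known as graph machine learning. It has become one of the most active areas of machine learning in recent years, with graph neural networks (GNNs) drawing particular attention.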

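CS224W is Stanford University's specialized course on graph machine learning. It offers comprehensive, authoritative coverage of data mining and machine learning (including neural networks) on graphs. If you want to learn the algorithms that operate on unstructured graph data, this is one of the most suitable courses.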

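The course instructor, Jure Leskovec, is an associate professor of computer science at Stanford University and a co-author of the graph representation learning methods node2vec and GraphSAGE.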

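His research focuses on mining and modeling social and information networks, particularly large-scale data, networks, and media data. He has published many papers in journals and conferences such as Nature, NeurIPS, KDD, and ICML, and has twice received the KDD Test of Time Award.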

Course Topics

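This course focuses on the computational, algorithmic, and modeling challenges of analyzing massive graphs. By studying the underlying graph structure and its features, it introduces students to machine learning techniques and data mining tools. Topics include: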

- Machine Learning for Graphs
- Traditional Methods for ML on Graphs
- Node Embeddings
- Link Analysis: PageRank
- Label Propagation for Node Classification
- Graph Neural Networks
- Knowledge Graph Embeddings
- Reasoning over Knowledge Graphs
- Frequent Subgraph Mining with GNNs
- Community Structure in Networks
- Traditional Generative Models for Graphs
- Deep Generative Models for Graphs
- Advanced Topics on GNNs
- Scaling Up GNNs
- Guest Lecture: GNNs for Computational Biology
- Guest Lecture: Industrial Applications of GNNs
- GNNs for Science

Course Materials | Download


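Scan the QR code in the image above, follow the official account, and reply with the keyword 🎯 "CS224W" to get the complete collection of materials. You can also click 🎯 here to see how to obtain the materials for more courses.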

ShowMeAI has sorted through the course materials and organized them into this complete, clearly structured package:

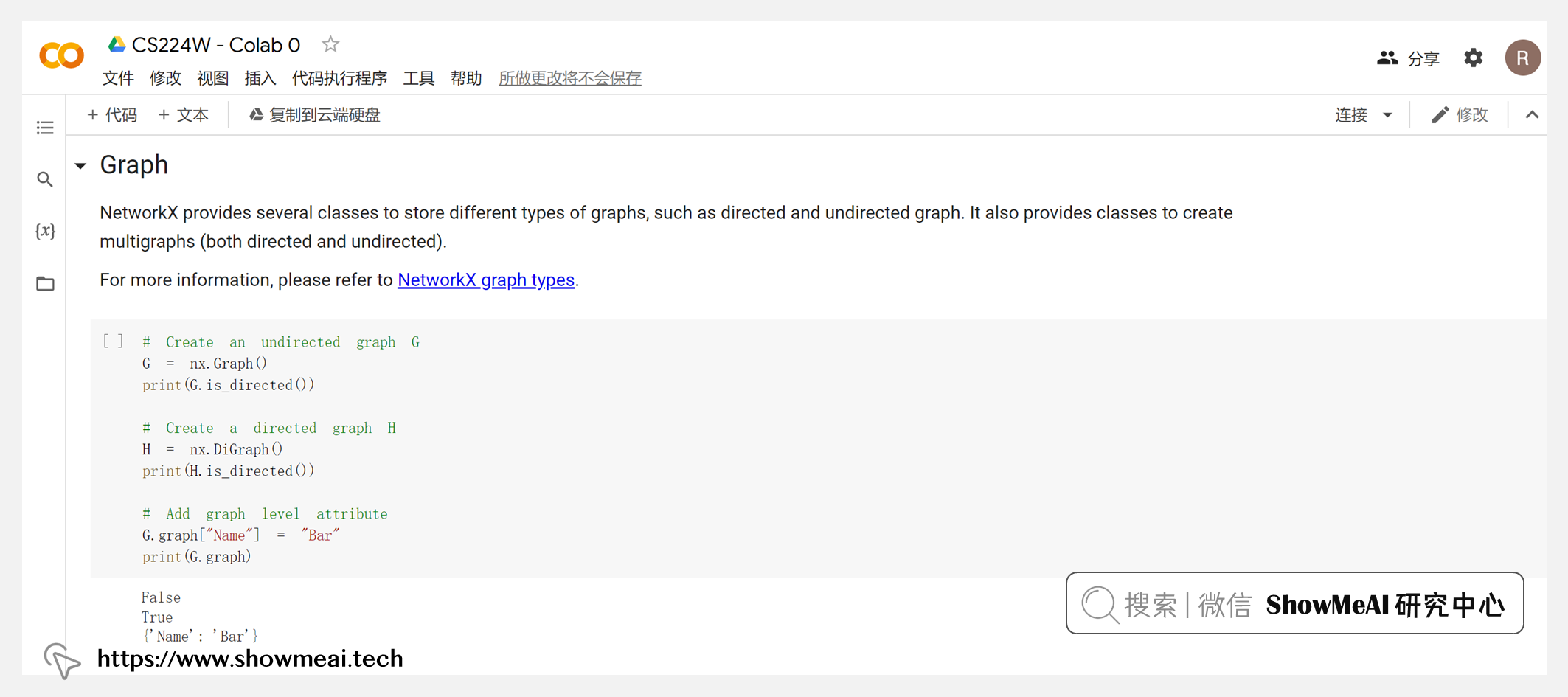

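- 📚 Slides (PDF). All chapters from Lecture 1 to 20; the combination of figures and text makes the material easier to follow.
- 📚 Code and reference solutions to the assignments (.ipynb). Code for Colab 0 to 4 and answers to Homework 0 to 3.
- 📚 Further reading and a collection of knowledge graph resources. Learning resources and lists recommended by the course.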

Course Videos | Bilibili

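ShowMeAI has uploaded the videos to Bilibili with bilingual Chinese-English subtitles for a friendlier learning experience. Click the video on the page to preview it. We recommend going to 👆 Bilibili to watch the full course videos.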

For this course, ShowMeAI has split some lectures into shorter, topic-based clips so that they are easier to find by title. The resulting video list is given here:

- Note: the section titles contain many technical terms, so they are kept in the original English.

| No. | Lecture section | Video title |
|---|---|---|
| L1 | L1.1 | Why Graphs |
| L2 | L1.2 | Applications of Graph ML |
| L3 | L1.3 | Choice of Graph Representation |
| L4 | L2.1 | Traditional Feature-based Methods: Node |
| L5 | L2.2 | Traditional Feature-based Methods: Link |
| L6 | L2.3 | Traditional Feature-based Methods: Graph |
| L7 | L3.1 | Node Embeddings |
| L8 | L3.2 | Random Walk Approaches for Node Embeddings |
| L9 | L3.3 | Embedding Entire Graphs |
| L10 | L4.1 | PageRank |
| L11 | L4.2 | PageRank: How to Solve |
| L12 | L4.3 | Random Walk with Restarts |
| L13 | L4.4 | Matrix Factorizing and Node Embeddings |
| L14 | L5.1 | Message passing and Node Classification |
| L15 | L5.2 | Relational and Iterative Classification |
| L16 | L5.3 | Collective Classification |
| L17 | L6.1 | Graph Neural Networks Introduction |
| L18 | L6.2 | Basics of Deep Learning |
| L19 | L6.3 | Deep Learning for Graphs |
| L20 | L7.1 | A General Perspective on GNN |
| L21 | L7.2 | A Single Layer of a GNN |
| L22 | L7.3 | Stacking layers of a GNN |
| L23 | L8.1 | Graph Augmentation for GNNs |
| L24 | L8.2 | Training Graph Neural Networks |
| L25 | L8.3 | Setting up GNN Prediction Tasks |
| L26 | L9.1 | How Expressive are Graph Neural Networks |
| L27 | L9.2 | Designing the Most Powerful GNNs |
| L28 | L10.1 | Heterogeneous & Knowledge Graph Embedding |
| L29 | L10.2 | Knowledge Graphs KG Completion |
| L30 | L10.3 | Knowledge Graph Completion |
| L31 | L11.1 | Reasoning in Knowledge Graphs |
| L32 | L11.2 | Answering Predictive Queries |
| L33 | L11.3 | Query2box Reasoning over KGs |
| L34 | L12.1 | Fast Neural Subgraph Matching & Counting |
| L35 | L12.2 | Neural Subgraph Matching |
| L36 | L12.3 | Finding Frequent Subgraphs |
| L37 | L13.1 | Community Detection in Networks |
| L38 | L13.2 | Network Communities |
| L39 | L13.3 | Louvain Algorithm |
| L40 | L13.4 | Detecting Overlapping Communities |
| L41 | L14.1 | Generative Models for Graphs |
| L42 | L14.2 | Erdos Renyi Random Graphs |
| L43 | L14.3 | The Small World Model |
| L44 | L14.4 | Kronecker Graph Model |
| L45 | L15.1 | Deep Generative Models for Graphs |
| L46 | L15.2 | Graph RNN Generating Realistic Graphs |
| L47 | L15.3 | Scaling Up & Evaluating Graph Gen |
| L48 | L15.4 | Application of Deep Graph Generative |
| L49 | L16.1 | Limitations of Graph Neural Networks |
| L50 | L16.2 | Position aware Graph Neural Networks |
| L51 | L16.3 | Identity-Aware Graph Neural Networks |
| L52 | L16.4 | Robustness of Graph Neural Networks |
| L53 | L17.1 | Scaling Up Graph Neural Networks to Large Graphs |
| L54 | L17.2 | GraphSAGE Neighbor Sampling |
| L55 | L17.3 | Cluster GCN Scaling up GNNs |
| L56 | L17.4 | Scaling up by Simplifying GNNs |
| L57 | L18 | GNNs in Computational Biology |
| L58 | L19.1 | Pre Training Graph Neural Networks |
| L59 | L19.2 | Hyperbolic Graph Embeddings |
| L60 | L19.3 | Design Space of Graph Neural Networks |

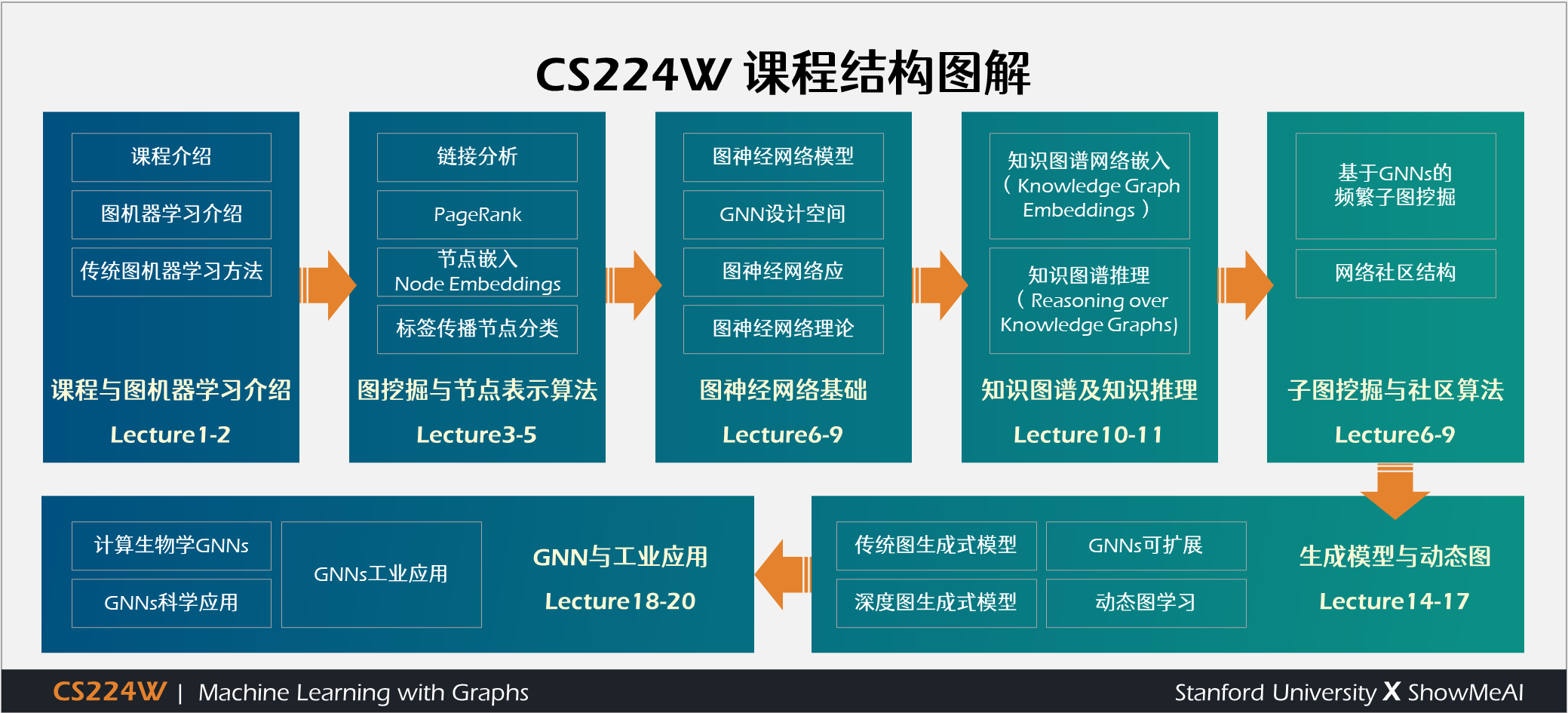

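This "CS224W Course Structure Diagram", compiled from the video content, shows the key points and the logical relationships among them at a glance, which should help you build a picture of the whole course.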

Study Suggestions

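- This course is self-contained.
- Each individual topic is easy to understand, but the course covers many topics, which raises its overall difficulty.
- Students are expected to have the following background:
  - Knowledge of basic computer science principles, sufficient to write a reasonably non-trivial computer program
  - Familiarity with basic probability theory and linear algebra
  - Familiarity with the basics of machine learning, algorithms, and graph theory
- The review sessions in the first weeks of the course will give an overview of the expected background.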
